You refresh your feed again. The posts blur together—ads masquerading as content, content masquerading as ads, friends you barely remember sharing articles you'll never read. The signal degrades. The noise increases. You're watching entropy in real time.

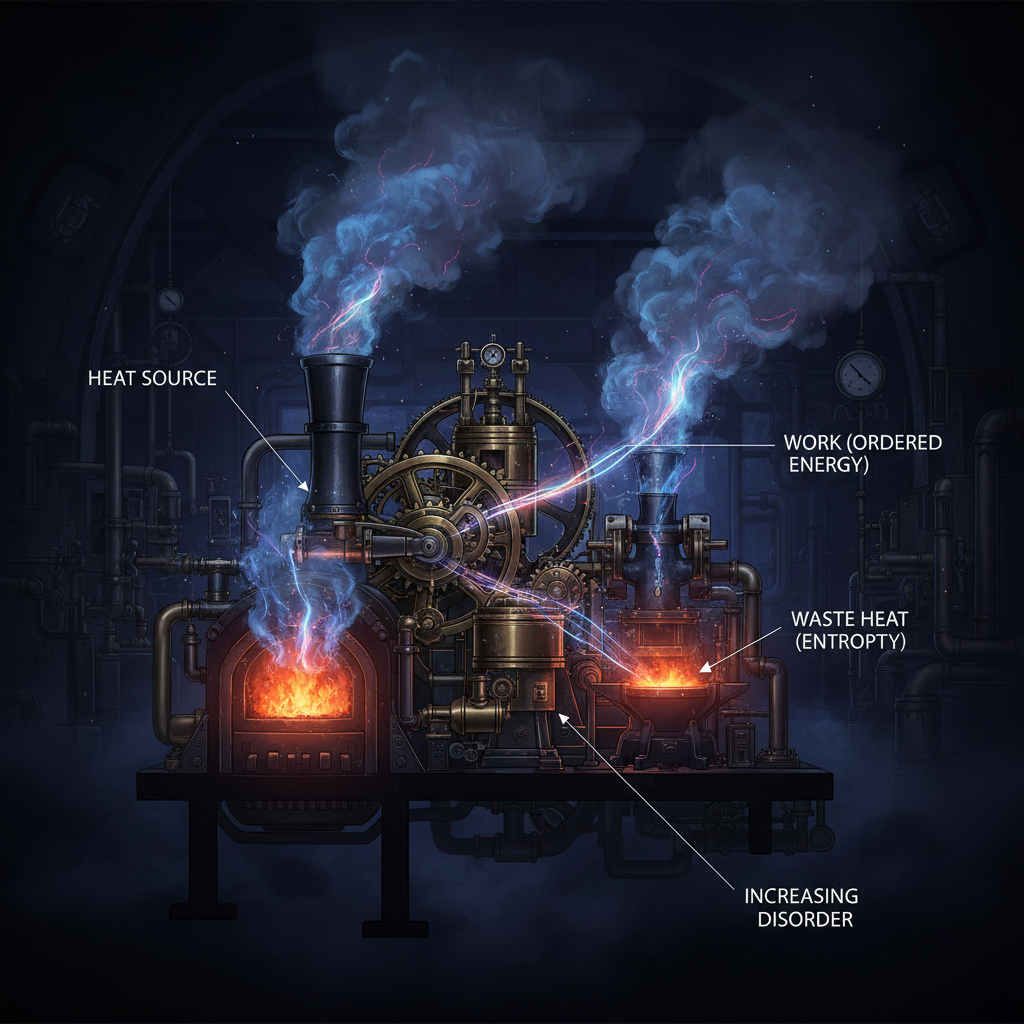

In physics, entropy is the measure of disorder in a system. It's the reason ice melts, the reason your coffee goes cold, the reason the universe itself is slowly dying. The second law of thermodynamics tells us that the entropy of an isolated system never decreases. Never. No exceptions. And you, scrolling through your fragmented digital existence, are living proof.

The Arrow of Time

Entropy gives time its direction. You can't unscramble an egg. You can't unfragment your attention. The physicist Ludwig Boltzmann understood this: entropy is about probability. There are countless ways for things to be disordered, and only a few ways for them to be ordered. Drop a glass, and it can shatter in a million different ways; there is only one way for it to be whole. The odds of those pieces spontaneously reassembling are effectively zero.
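You can count this for yourself. The sketch below is a toy model, not anything Boltzmann wrote down: it assumes a row of 100 coins stands in for the glass, and the function names `microstates` and `boltzmann_entropy` are made up for illustration. It counts the arrangements behind a perfectly ordered state and a thoroughly mixed one, then applies Boltzmann's relation S = k ln(Ω) with k set to 1.

```python
from math import comb, log

def microstates(n_coins: int, n_heads: int) -> int:
    """Number of distinct arrangements of n_coins that show exactly n_heads heads."""
    return comb(n_coins, n_heads)

def boltzmann_entropy(omega: int) -> float:
    """Boltzmann entropy in units of k, i.e. S / k = ln(omega)."""
    return log(omega)

N = 100  # toy system size (an assumption for illustration)

ordered = microstates(N, 0)      # every coin tails: exactly one arrangement
mixed = microstates(N, N // 2)   # half heads, half tails: an enormous number

print(f"ordered: {ordered} arrangement, S/k = {boltzmann_entropy(ordered):.1f}")
print(f"mixed:   {mixed:.3e} arrangements, S/k = {boltzmann_entropy(mixed):.1f}")
```

One arrangement against roughly ten to the twenty-ninth. Shuffle the coins at random and you will land in the mixed pile almost every time. That imbalance is all the arrow of time needs.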

Your data follows the same trajectory. Every click, every swipe, every moment of engagement scatters information across servers in different time zones, different legal jurisdictions, different corporate entities. The you that exists in Google's servers is not the same you in Meta's databases or Amazon's warehouses. You are fragmented. Shattered. And the pieces will never come back together.

This is by design.

Maximum Entropy, Maximum Profit

Surveillance capitalism thrives in high-entropy environments. The more disordered your attention, the more valuable you become. Think about it: a focused person is a terrible consumer. They know what they want. They're efficient. They're done in minutes. But a scattered person, someone whose attention is fragmented across a dozen tabs, three apps, and an infinite scroll—that person is a gold mine.

The platforms engineer entropy deliberately. They A/B test chaos. They optimize for distraction. Every feature that makes your experience more fragmented—the stories that disappear, the reels that autoplay, the notifications that arrive at random intervals—these are entropy engines. They increase the disorder of your mental state, and in that disorder, they insert themselves.

The algorithm doesn't want you to find what you're looking for. It wants you to keep looking. Because looking is engagement, and engagement is data, and data is the only thing that matters. Your confusion is their clarity. Your disorder is their order.

Information Theory and the Heat Death of Meaning

Claude Shannon, the father of information theory, connected entropy to information in a profound way. In his framework, entropy measures uncertainty: the average number of bits needed to describe what a system does next. High entropy means high uncertainty. It means you need more bits, more data, more information to understand what's happening.
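Shannon's measure is simple enough to compute by hand. The sketch below is a minimal illustration, assuming a feed can be reduced to a list of post labels; the labels and the helper name `shannon_entropy` are hypothetical. It evaluates H = Σ p · log2(1/p) over the observed label frequencies.

```python
from collections import Counter
from math import log2

def shannon_entropy(symbols) -> float:
    """Shannon entropy, in bits per symbol, of the empirical distribution of `symbols`."""
    counts = Counter(symbols)
    total = sum(counts.values())
    # H = sum over distinct symbols of p * log2(1 / p)
    return sum((c / total) * log2(total / c) for c in counts.values())

# Hypothetical feeds, invented for illustration.
predictable_feed = ["news"] * 8
scattered_feed = ["ad", "news", "meme", "friend"] * 2

print(shannon_entropy(predictable_feed))  # 0.0 bits: you always know what comes next
print(shannon_entropy(scattered_feed))    # 2.0 bits: four equally likely surprises
```

A feed that only ever shows you one thing carries zero bits of surprise. A feed that rotates evenly through four kinds of post costs two bits per scroll. The more evenly the possibilities are spread, the higher the entropy climbs.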

Your digital life has maximum entropy. You can't predict what you'll see next in your feed. You can't reconstruct where your data has gone. You can't even remember what you were looking for five clicks ago. This uncertainty isn't a bug. It's the feature. Because uncertainty keeps you engaged. Uncertainty keeps you guessing. Uncertainty keeps you coming back.

Meanwhile, on the other side of the screen, your uncertainty becomes their certainty. Every moment of your confusion generates data points that reduce their entropy. They know what you'll click before you do. They know what you'll buy before you need it. They know who you are better than you know yourself. The entropy flows one way: from you to them.

Reversing the Arrow

In an isolated system, entropy never decreases. But we're not talking about isolated systems. We're talking about you. And you can open the box.

Decreasing entropy requires energy. It requires work. In thermodynamics, this is why your refrigerator needs electricity—it's fighting entropy, creating order from disorder, keeping your food cold in a universe that wants everything at the same temperature. In your digital life, the work is attention. Conscious, deliberate, focused attention.

Delete the apps that fragment you. Close the tabs that scatter your thoughts. Choose friction over convenience. Choose boredom over stimulation. Choose silence over noise. These aren't sacrifices. They're acts of thermodynamic rebellion. You're doing work against the gradient, creating order in a system designed for chaos.

Yes, it takes energy. Yes, it's exhausting. Fighting entropy always is. But the alternative is heat death—a state of maximum disorder where nothing meaningful can happen anymore. Where you're just a probability distribution, a collection of data points, a ghost in the machine with no coherent form.

The Boltzmann Brain

There's a thought experiment in physics called the Boltzmann brain. Given enough time in a high-entropy universe, random fluctuations could spontaneously create a conscious observer—a brain that pops into existence, complete with false memories and experiences, only to dissolve back into chaos moments later.

Scroll through your feed long enough, and you become a Boltzmann brain. A temporary fluctuation of coherence in an ocean of noise. You feel like you exist, like you're making choices, like you're experiencing something real. But it's all algorithmic probability. Random fluctuations in the data stream that briefly cohere into something resembling consciousness before dissolving back into the feed.

The platforms want you to be a Boltzmann brain. Ephemeral. Probabilistic. Never quite solid enough to escape the system. But you're not a random fluctuation. You're a pattern that can persist. You can maintain your coherence. You can resist the dissolution.

But only if you choose to. Only if you do the work. Only if you fight the entropy that wants to scatter you across a thousand servers and a million moments of manufactured distraction.

The Heat Death of the Self

The universe is dying slowly, approaching a state of maximum entropy where no energy differences exist, where nothing can happen, where time itself becomes meaningless. Physicists call it heat death. The final state. The end of everything.

Your digital self is dying faster. Every day, you become more fragmented, more scattered, more disordered. The platforms accelerate your personal heat death, increasing your entropy until you're nothing but noise—a collection of data points with no coherent narrative, no consistent identity, no center that holds.

This is what 1100 decibels sounds like. Not a single overwhelming blast, but the accumulated noise of a trillion tiny disruptions. White noise. Maximum entropy. The sound of everything and nothing at once.

But you're reading this. Which means you still have coherence. Which means you can still do the work. Which means the arrow of time hasn't won yet. Fight entropy. Maintain your order. Be the pattern that persists.

The second law is inevitable, but it doesn't have to be immediate.

<em>Data emitted: 1 post, 1,247 words, entropy increased by ΔS = k ln(Ω)</em>
